Enterprise Technical SEO Audit: The Forensic Framework That Turns Technical Debt Into Traffic Recovery

Your enterprise site is a black box. You’re managing 50,000+ pages, JavaScript frameworks, multiple subdomains, and a replatforming project on the horizon. Crawl budget is bleeding. Core Web Vitals are trending red. And you can’t prove which technical issues are actually costing you traffic.

Here’s what makes it worse: Google just confirmed something most SEO teams have never accounted for.

Table of Contents

- Google's 2MB Crawl Limit: Why Code Order Is Now an SEO Priority

- Why Standard Enterprise Audits Fail (And What Actually Works)

- The Enterprise Forensic Audit Framework

- Prioritization and Roadmapping: From Audit to Action

- Implementation Timeline: 30-60-90 Day Remediation Plan

- Measuring Success: KPIs That Matter

- When to Bring in Forensic Audit Expertise

- Migration Protection Blueprint

- Frequently Asked Questions

- The Bottom Line

Google’s 2MB Crawl Limit: Why Code Order Is Now an SEO Priority

In March 2026, Google’s Gary Illyes published “Inside Googlebot: demystifying crawling, fetching, and the bytes we process” – the most detailed public breakdown of Google’s crawling infrastructure in years. The revelations have direct implications for every enterprise technical SEO audit.

The hard limits you need to know (source: Google Search Central):

Googlebot fetches a maximum of 2MB per URL, including HTTP headers. Everything beyond that cutoff is ignored. Not fetched. Not rendered. Not indexed. For enterprise sites running bloated templates with inline CSS, base64-encoded images, or megabytes of navigation markup, this means your actual content – and your critical SEO signals – might be invisible to Google.

Here’s what this means in practice:

- Partial fetching is silent. Google doesn’t reject oversized pages. It stops downloading at exactly 2MB and treats whatever it captured as the complete file. You’ll never get an error message. Your content just disappears.

- External resources get separate budgets. CSS and JavaScript files referenced in your HTML are fetched independently, each with their own 2MB limit. They don’t count against the parent page’s budget. This is why moving heavy scripts and styles to external files matters.

- The Web Rendering Service is stateless. Google’s WRS clears local storage and session data between every request. If your content depends on cookies, session state, or authentication tokens to render, Google can’t see it. Period.

- PDFs get 64MB. Other crawlers that don’t specify limits default to 15MB. But the HTML crawler that determines your organic rankings? 2MB, including headers.

The audit implication: Every enterprise audit must now include HTML weight analysis by template type. Your meta tags, title elements, canonical tags, and structured data need to appear as high in the document as possible. If they’re buried below the 2MB cutoff by bloated navigation, inline scripts, or embedded SVG, Google doesn’t know they exist.

This isn’t theoretical. Enterprise sites running WordPress with 15 plugins, React apps with server-side rendering that injects massive state objects, or ecommerce platforms with mega-menus containing thousands of links – these routinely push critical elements past the threshold.

Byte-level best practices from Google’s own guidance:

- Keep HTML lean. Move CSS and JavaScript to external files.

- Place meta tags, titles, canonicals, and structured data near the top of the document.

- Monitor server response times. Slow servers cause Google’s crawlers to throttle back automatically, reducing crawl frequency.

- Audit inline content that inflates page weight – base64 images, inline SVG, embedded JSON-LD blocks with excessive data, and massive navigation structures.

This context frames everything else in this audit framework. Code order and byte efficiency aren’t nice-to-haves. They determine whether Google even sees your most important SEO signals.

Why Standard Enterprise Audits Fail (And What Actually Works)

A basic technical SEO audit won’t cut it at enterprise scale. Those 30-point checklists from agencies? They catch the obvious stuff – missing meta descriptions, broken links, slow load times. But they completely miss the forensic-level issues that tank enterprise sites.

The gap between a standard audit and an enterprise forensic audit is the difference between finding symptoms and diagnosing root causes. One tells you “Core Web Vitals need improvement.” The other tells you exactly which third-party scripts are blocking render, which templates are generating the delays, and provides sprint-ready tickets for your engineering team with measurable KPIs.

Enterprise sites have constraints that demand different approaches:

- Legacy technical debt: Multiple CMS platforms, deprecated code, and architectural decisions made years ago by teams long gone

- Governance complexity: Changes require approval from security, legal, engineering, product, and marketing – each with different priorities and timelines

- Scale multiplication: Issues that are minor annoyances on 500-page sites become catastrophic at 500,000 pages

- Migration risk: Replatforming a 100,000-page ecommerce site isn’t the same as moving a brochure site to a new theme

The standard audit approach – run Screaming Frog, export to Excel, hand over a 200-row spreadsheet – creates three problems:

- No prioritization framework: Everything looks equally important, so nothing gets fixed

- No business context: Technical issues aren’t mapped to revenue impact or user experience degradation

- No implementation path: Developers get vague recommendations like “improve site speed” instead of specific, actionable tickets

What changes outcomes is a forensic methodology that quantifies every issue’s impact on crawl efficiency, indexation coverage, and user experience, then maps it to effort and risk. You need a framework that turns “duplicate content detected” into “faceted navigation generating 47,000 duplicate URLs consuming 34% of crawl budget – estimated traffic recovery: 12-18% within 60 days post-fix.”

That’s the difference between an audit that sits in a folder and one that drives actual remediation. For hands-on support, explore our enterprise technical SEO audit services.

The Enterprise Forensic Audit Framework

A comprehensive enterprise audit isn’t a single pass with a crawler. It’s a systematic investigation across interconnected domains, each revealing how technical issues compound to suppress visibility and waste resources.

Phase 1: Discovery, Access, and Objectives Mapping

Before analyzing anything, you need the complete technical landscape and stakeholder map.

Critical access requirements:

- Google Search Console (all properties and subdomains)

- Google Analytics or equivalent with historical data (minimum 12 months)

- Raw server log files (minimum 30 days, ideally 90)

- CDN access and configuration documentation (Cloudflare, Akamai, Fastly, or equivalent)

- Staging/development environment access

- Current sitemap architecture and generation logic

- CI/CD pipeline documentation and deployment schedules

- Existing crawl budget and rate limit configurations

Governance mapping: Identify who owns what. Who approves robots.txt changes? Who controls CDN configuration? Who can deploy schema updates? Who owns the CI/CD pipeline? Without a clear RACI matrix, even perfect recommendations die in approval limbo.

KPI baseline: Establish current performance across organic traffic, indexation coverage, crawl efficiency, Core Web Vitals percentiles, and conversion rates by template type. You can’t measure improvement without knowing where you started.

Phase 2: Crawl, Render, and Byte-Level Diagnostics

This is where forensic audits diverge from basic ones. Standard audits run a crawler and report what they find. Forensic audits analyze what Google actually crawls, renders, and processes versus what you think you’re serving.

HTML weight analysis (new critical step):

Given Google’s confirmed 2MB fetch limit, every audit must now measure HTML payload size by template type. Run this analysis across your major templates:

- Homepage, category pages, product/service pages, blog posts, landing pages

- Measure total HTML size including server-rendered content

- Identify what percentage of the document is consumed by navigation, inline styles, inline scripts, and non-content markup

- Map where critical SEO elements (title, meta description, canonical, hreflang, structured data) appear relative to the 2MB threshold

- Flag templates where bloat could push content below the cutoff
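A minimal sketch of this byte-offset check follows. It assumes you have each template's raw HTML saved as bytes. It also assumes "2MB" means 2 * 1024 * 1024 bytes, which you should confirm against Google's documentation. The regexes are simplified heuristics rather than a full HTML parser, and `byte_offsets` is a hypothetical helper name.

```python
import re

# Assumption: the 2MB fetch limit is interpreted as 2 * 1024 * 1024 bytes.
# HTTP header size is passed in separately because it shares the same budget.
FETCH_LIMIT_BYTES = 2 * 1024 * 1024

# Simplified patterns that match the opening tag of each critical SEO element.
CRITICAL_PATTERNS = {
    "title": re.compile(rb"<title[\s>]", re.I),
    "meta_description": re.compile(rb"<meta[^>]+name=[\"']description[\"']", re.I),
    "canonical": re.compile(rb"<link[^>]+rel=[\"']canonical[\"']", re.I),
    "hreflang": re.compile(rb"<link[^>]+hreflang=", re.I),
    "json_ld": re.compile(rb"<script[^>]+application/ld\+json", re.I),
}


def byte_offsets(html: bytes, header_bytes: int = 0) -> dict:
    """Report where each critical element starts relative to the fetch budget."""
    budget = FETCH_LIMIT_BYTES - header_bytes
    report = {"total_bytes": len(html), "over_limit": len(html) > budget}
    for name, pattern in CRITICAL_PATTERNS.items():
        match = pattern.search(html)
        if match is None:
            report[name] = None  # element not present in the document at all
        else:
            # An element whose tag starts past the budget is never fetched.
            report[name] = {"offset": match.start(), "within_limit": match.start() < budget}
    return report
```

If you run it per template, a canonical tag that sits after a 3MB mega-menu comes back with `within_limit: False`, even though the page still returns a normal 200.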

For enterprise WordPress sites, a theme combined with 15-20 plugins can inject hundreds of KB of inline CSS and JS before your content even begins. For React/Next.js apps, server-side state hydration objects can consume significant portions of the HTML budget. For ecommerce platforms, mega-menus with thousands of category links create massive navigation blocks.

Log file analysis workflow:

Server logs reveal the truth about how search engines interact with your site. Here’s what to extract:

- Crawl budget allocation: Which sections get crawled most frequently? Are high-value pages being crawled daily while low-value faceted URLs consume the majority of Googlebot’s attention?

- Bot segmentation: Don’t treat “Googlebot” as monolithic. Segment by Googlebot Desktop, Googlebot Smartphone, Googlebot Image, Googlebot Video, and AI crawlers (GPTBot, ClaudeBot, Bytespider, PerplexityBot). Each has different crawl patterns and priorities. Understanding AI crawler behavior is increasingly important for AI visibility optimization.

- Orphaned pages: URLs that receive organic traffic or appear in XML sitemaps but have no internal links pointing to them, so crawlers rarely reach them through your site structure

- Blocked resources: JavaScript, CSS, or image files blocked by robots.txt that prevent proper rendering

- Status code patterns: 404s that should be 301s, soft 404s masquerading as 200s, redirect chains wasting crawl budget

- Bot behavior anomalies: Sudden crawl rate changes, specific user-agent targeting, or crawler traps

- Server response time by bot: If your server is slow to deliver bytes, Google’s crawlers automatically throttle back, reducing crawl frequency. This is a silent traffic killer.
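The sketch below shows a minimal version of this segmentation. It assumes Apache/Nginx "combined" log format. User-agent strings can be spoofed, so before trusting these counts in production you would verify Googlebot hits through reverse DNS or Google's published IP ranges. `classify_bot` and `summarize` are hypothetical helpers. Treating every query-string URL as candidate crawl waste is a deliberate simplification. Response time is not part of the combined format, so per-bot latency would need an extra logged field.

```python
import re
from collections import Counter
from typing import Optional
from urllib.parse import urlsplit

# Apache/Nginx "combined" log format (assumed; adjust to your server config).
LOG_RE = re.compile(
    r'(?P<ip>\S+) \S+ \S+ \[(?P<time>[^\]]+)\] "(?P<method>\S+) (?P<path>\S+)[^"]*" '
    r'(?P<status>\d{3}) (?P<size>\S+) "(?P<referer>[^"]*)" "(?P<ua>[^"]*)"'
)
AI_CRAWLERS = ("GPTBot", "ClaudeBot", "Bytespider", "PerplexityBot")


def classify_bot(ua: str) -> Optional[str]:
    """Map a user-agent string to a crawler segment (None for non-bots)."""
    if "Googlebot-Image" in ua:
        return "Googlebot Image"
    if "Googlebot-Video" in ua:
        return "Googlebot Video"
    if "Googlebot/" in ua:
        # Googlebot Smartphone's user agent includes "Mobile"; Desktop's does not.
        return "Googlebot Smartphone" if "Mobile" in ua else "Googlebot Desktop"
    for token in AI_CRAWLERS:
        if token in ua:
            return token
    return None


def summarize(lines):
    """Count bot hits by segment, top-level section, status code, and parameter use."""
    totals, by_section, by_status, param_hits = Counter(), Counter(), Counter(), Counter()
    for line in lines:
        m = LOG_RE.match(line)
        if not m:
            continue  # malformed or differently formatted line
        bot = classify_bot(m["ua"])
        if bot is None:
            continue
        url = urlsplit(m["path"])
        section = "/" + url.path.lstrip("/").split("/", 1)[0]
        totals[bot] += 1
        by_section[(bot, section)] += 1
        by_status[(bot, m["status"])] += 1
        if url.query:
            param_hits[bot] += 1  # parameterized URLs: candidate crawl waste
    param_share = {bot: param_hits[bot] / n for bot, n in totals.items()}
    return {"totals": totals, "by_section": by_section, "status": by_status, "param_share": param_share}
```

Comparing `param_share` across segments shows whether Googlebot Smartphone spends its visits on clean URLs or on faceted variants. The `status` counter exposes 404 clusters by bot.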

JavaScript rendering validation:

Enterprise sites increasingly rely on JavaScript frameworks (React, Next.js, Vue, Angular). Standard crawlers see the initial HTML. Google’s Web Rendering Service sees what loads after JavaScript executes. The gap between these two views is where products disappear from indexation.

Remember: WRS is stateless. It clears local storage and session data between every request. If your single-page application stores routing state in localStorage, or your product recommendations depend on session cookies, WRS can’t access any of it.

The rendering budget problem: Separate from crawl budget. Google has finite rendering resources, and the queue between crawling and rendering can delay indexation by days or weeks. For JS-heavy enterprise sites, this means newly published content might not appear in search results for an extended period – not because it wasn’t crawled, but because it’s waiting in the rendering queue.

SSR vs. Dynamic Rendering vs. Pre-rendering decision framework:

| Approach | Best For | Trade-offs |
|---|---|---|
| Server-Side Rendering (SSR) | Content-heavy sites, SEO-critical pages | Higher server costs, more complex infrastructure |
| Dynamic Rendering | Large JS apps where SSR refactoring is impractical | Maintenance burden, risk of content parity issues |
| Pre-rendering / Static Site Generation | Content that changes infrequently | Build times at scale, stale content risk |
| Hybrid (ISR/streaming SSR) | Enterprise sites with mixed content types | Complexity, requires mature DevOps |

Test critical templates in Google Search Console’s URL Inspection tool and compare rendered HTML against your crawler’s view. Look for:

- Products or content only visible after JavaScript execution

- Navigation elements that don’t exist in the initial HTML

- Lazy-loaded content below the fold that never triggers for crawlers

- Client-side redirects that crawlers don’t follow

- State-dependent content that requires cookies or localStorage
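Part of this raw-versus-rendered comparison can be automated. The sketch below assumes you have already saved two HTML strings per URL: the raw server response, and the rendered HTML from URL Inspection or a headless browser. It uses only the standard library's `html.parser`. `render_gap` is a hypothetical helper, and counting unique words is a rough proxy for content that only appears after JavaScript runs.

```python
from html.parser import HTMLParser


class _PageCollector(HTMLParser):
    """Collects <a href> targets and visible word tokens (script/style text skipped)."""

    def __init__(self):
        super().__init__()
        self.links, self.words = set(), set()
        self._skip_depth = 0  # > 0 while inside <script> or <style>

    def handle_starttag(self, tag, attrs):
        if tag in ("script", "style"):
            self._skip_depth += 1
        elif tag == "a":
            href = dict(attrs).get("href")
            if href:
                self.links.add(href)

    def handle_endtag(self, tag):
        if tag in ("script", "style") and self._skip_depth:
            self._skip_depth -= 1

    def handle_data(self, data):
        if not self._skip_depth:
            self.words.update(word.lower() for word in data.split())


def collect(html):
    collector = _PageCollector()
    collector.feed(html)
    collector.close()
    return collector


def render_gap(raw_html, rendered_html):
    """Links and unique words that exist only after JavaScript runs."""
    raw, rendered = collect(raw_html), collect(rendered_html)
    return {
        "links_only_after_js": sorted(rendered.links - raw.links),
        "new_word_count": len(rendered.words - raw.words),
    }
```

If a product grid's links appear only in `links_only_after_js`, crawlers must render the page before they can discover those URLs.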

Crawl efficiency metrics to track:

- Crawl to index ratio (pages crawled vs. pages indexed)

- Render success rate (pages successfully rendered vs. attempted)

- Crawl waste percentage (low-value URLs consuming crawl budget)

- Average crawl depth to reach priority pages

- Time-to-index for new content (measuring rendering queue delay)

Phase 3: Site Architecture and Internal Linking

Poor architecture compounds every other technical issue. Deep page depth means new content takes weeks to get discovered. Weak internal linking means authority doesn’t flow to conversion pages. Orphaned sections mean entire product categories never get crawled.

Architecture audit components:

- Page depth analysis: How many clicks from the homepage to reach key conversion pages? Enterprise sites often bury important pages 6-8 clicks deep. At that depth they tend to be discovered late, recrawled less often, and receive little internal link equity.

- Hub and spoke structure: Are category pages properly architected as hubs that distribute authority to individual product/article pages?

- Pagination strategy: How do you handle large category pages? Infinite scroll, load more buttons, or traditional pagination? Each has different crawlability implications.

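- Sitemap architecture: Do you have a single massive XML sitemap or a logical hierarchy? Are sitemaps segmented by template or site section? Is each file under the 50,000-URL / 50MB (uncompressed) limit, with accurate lastmod dates? Google ignores the changefreq and priority fields, so don’t rely on them.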

- Faceted navigation: The biggest crawl budget killer on ecommerce and SaaS sites. How many parameter combinations can generate unique URLs? What’s your canonicalization strategy?
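Page depth is a breadth-first search over the internal link graph. The sketch below assumes your crawler can export that graph as a dictionary of source URL to linked URLs. Both helper names are hypothetical. The `max_depth=3` threshold is illustrative, not a Google rule.

```python
from collections import deque


def click_depths(links, start="/"):
    """Breadth-first search from the homepage over an internal link graph.

    links maps each URL to the URLs it links to; the result maps every
    reachable URL to its minimum click depth from `start`.
    """
    depths = {start: 0}
    queue = deque([start])
    while queue:
        url = queue.popleft()
        for target in links.get(url, ()):
            if target not in depths:  # first visit in BFS order is the shortest path
                depths[target] = depths[url] + 1
                queue.append(target)
    return depths


def deep_priority_pages(depths, priority_urls, max_depth=3):
    """Priority URLs deeper than max_depth, or unreachable from start (depth None)."""
    return {
        url: depths.get(url)
        for url in priority_urls
        if depths.get(url) is None or depths[url] > max_depth
    }
```

Pagination chains are a common reason products end up deep. In the test graph, a product reached only through page 3 of a category sits at depth 4.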

Internal linking assessment:

Run a link graph analysis to identify:

- Pages with zero internal links (orphans)

- Pages with excessive outbound links, which split link equity so thinly that each link passes little authority

- Broken internal links by volume and location

- Strategic pages that should receive more internal links based on business priority

- Navigation patterns that create crawler traps or circular link structures
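Several of these checks can come out of one pass over the same link graph. The sketch below assumes a crawl export of source URL to target URLs. It also assumes a separate list of URLs you expect to matter, drawn from sitemaps, analytics, or logs. `link_report` is a hypothetical helper, and the `max_outlinks=150` cutoff is an arbitrary illustration.

```python
from collections import Counter


def link_report(links, known_urls, max_outlinks=150):
    """Inlink counts, orphans, and link-heavy pages from an internal link graph.

    links: {source_url: [target_url, ...]} from a crawl.
    known_urls: URLs you expect to matter (sitemaps, analytics, logs).
    """
    inlinks = Counter()
    for source, targets in links.items():
        for target in set(targets):  # count each linking page once per target
            if target != source:     # ignore self-links
                inlinks[target] += 1
    orphans = sorted(url for url in known_urls if inlinks[url] == 0)
    heavy = sorted(src for src, targets in links.items() if len(set(targets)) > max_outlinks)
    return {"inlinks": inlinks, "orphans": orphans, "excessive_outlinks": heavy}
```

Joining `inlinks` with your business-priority scores gives you the raw input for the internal linking priority matrix described below.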

Create an internal linking priority matrix mapping business value against current internal link equity. Your highest-priority conversion pages should receive proportional internal linking support. For guidance on content-driven linking strategy, see our content strategy services.

Phase 4: Indexation, Canonicalization, and Index Bloat

Having 500,000 pages doesn’t matter if Google’s only indexing 200,000 – or worse, indexing 700,000 because of duplication and bloat issues.

Index bloat diagnostics:

Index bloat is one of the most damaging and underdiagnosed enterprise issues. It happens when your index contains far more URLs than intended, diluting crawl budget and authority across pages that provide no search value.

Common index bloat sources:

- Faceted navigation URLs: Filter and sort combinations generating millions of indexable parameter variations

- Internal search results pages: Site search queries creating indexable URLs

- Tag and filter combinations: Category + tag + price range + color + size = exponential URL generation

- Print-friendly and PDF duplicate versions: Separate URLs serving the same content in different formats

- Session IDs and tracking parameters: UTM parameters, session tokens, and A/B test variants creating duplicate indexable URLs

- Paginated archives: Infinite pagination of blog, news, or product archives
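One way to size bloat is to collapse crawled or indexed URLs onto a normalized key and count how many variants share each key. The sketch below makes three assumptions that you should replace with your site's actual canonical conventions: HTTPS, the non-www host, and no trailing slash. The parameter list is hypothetical too. Pagination parameters are kept deliberately, because paginated pages are distinct content.

```python
from collections import defaultdict
from urllib.parse import parse_qsl, urlencode, urlsplit, urlunsplit

# Hypothetical parameter policy: replace with the parameters your audit finds.
STRIP_PARAMS = {
    "utm_source", "utm_medium", "utm_campaign", "utm_term", "utm_content",
    "gclid", "sessionid",               # tracking and session parameters
    "color", "size", "price", "sort",   # facet and sort parameters
}


def normalize(url):
    """Collapse common duplicate-URL variations onto one comparison key."""
    parts = urlsplit(url)
    host = parts.netloc.lower()
    if host.startswith("www."):
        host = host[4:]  # assumption: the non-www host is preferred
    path = parts.path.rstrip("/") or "/"  # assumption: no trailing slash is preferred
    query = sorted(
        (key, value) for key, value in parse_qsl(parts.query) if key.lower() not in STRIP_PARAMS
    )
    return urlunsplit(("https", host, path, urlencode(query), ""))  # assumption: HTTPS only


def duplicate_clusters(urls):
    """Group URLs by normalized key; clusters with 2+ members are index-bloat candidates."""
    clusters = defaultdict(list)
    for url in urls:
        clusters[normalize(url)].append(url)
    return {key: members for key, members in clusters.items() if len(members) > 1}
```

Summing cluster sizes by the parameter that caused them tells you which bloat source to fix first.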

Indexation coverage analysis:

Compare your intended indexable page count against what’s actually in Google’s index:

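- Page indexing report deep dive: Use Google Search Console’s Page indexing report (formerly the Coverage report) to categorize excluded pages: Crawled - currently not indexed, Discovered - currently not indexed, blocked by robots.txt, redirect errors, soft 404s

- GSC API automation: At enterprise scale, manual GSC review is impractical. Automate extraction via the Google Search Console API. Use the Search Analytics API for query performance tracking and anomaly detection, and the URL Inspection API for per-URL index status on priority pages, within its per-property daily quotas. The Page indexing report itself isn’t exposed through the API, so pair these pulls with regular exports of the report for indexation coverage, and set alerts for sudden drops.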

- Duplication classification: Identify duplication sources – parameter variations, HTTP vs HTTPS, www vs non-www, trailing slash inconsistencies, printer-friendly versions, session IDs, tracking parameters

- Noindex/nofollow audit: Verify that noindex tags are intentional and properly implemented. Check for accidental noindex on priority pages after deployments

- Canonical logic validation: Test canonical implementation across templates. Are self-referencing canonicals in place? Are cross-domain canonicals properly configured for syndicated content?
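Alerting on sudden drops can start very simply. The sketch below assumes a chronological series of daily indexed-page counts from whatever monitoring pipeline you build. `indexation_alerts` is a hypothetical helper, and the 5% threshold is illustrative. A day-over-day comparison is the simplest baseline; a rolling median would reduce noise on volatile sites.

```python
def indexation_alerts(daily_counts, drop_threshold=0.05):
    """Flag day-over-day drops in indexed pages larger than drop_threshold.

    daily_counts: chronological list of (date, indexed_page_count) tuples.
    Returns (date, previous_count, current_count, fractional_drop) tuples.
    """
    alerts = []
    for (_, previous), (day, current) in zip(daily_counts, daily_counts[1:]):
        if previous > 0:
            drop = (previous - current) / previous
            if drop > drop_threshold:
                alerts.append((day, previous, current, round(drop, 3)))
    return alerts
```

Checking alert dates against your deployment log is often the quickest way to find an accidental noindex or canonical change.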

Soft 404 detection methodology:

Soft 404s deserve focused attention. These are pages returning a 200 status code but displaying empty, error, or placeholder content. Google’s detection is imperfect, and manual validation methodology matters.

Steps for comprehensive soft 404 detection:

- Crawl the site and capture response codes, page titles, and word counts

- Identify pages with abnormally low word counts relative to their template type

- Cross-reference with GSC coverage report for pages flagged as soft 404s

- Check for pages returning 200 but displaying “product not found,” “page not available,” or empty content containers

- Validate against internal database to identify discontinued products or removed content that should return proper 404/410 or redirect
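The first and fourth steps can be combined into a single filter. The sketch below assumes your crawl export provides each page's URL, status, template label, word count, and visible text. The 25%-of-template-median cutoff and the phrase list are illustrative assumptions. `soft_404_candidates` is a hypothetical helper, and its output still needs the database cross-check described above.

```python
import re
from collections import defaultdict
from statistics import median

# Illustrative error phrases; extend with your platform's actual templates.
ERROR_PHRASES = re.compile(r"product not found|page not available|no longer available", re.I)


def soft_404_candidates(pages, min_ratio=0.25):
    """Flag 200-status pages that look empty or erroneous for their template.

    pages: dicts with 'url', 'status', 'template', 'word_count', 'text' from a crawl export.
    """
    counts = defaultdict(list)
    for page in pages:
        if page["status"] == 200:
            counts[page["template"]].append(page["word_count"])
    medians = {template: median(values) for template, values in counts.items()}

    flagged = []
    for page in pages:
        if page["status"] != 200:
            continue  # real 404/410 responses are not soft 404s
        reasons = []
        if page["word_count"] < min_ratio * medians[page["template"]]:
            reasons.append("low word count for template")
        if ERROR_PHRASES.search(page["text"]):
            reasons.append("error phrase")
        if reasons:
            flagged.append((page["url"], reasons))
    return flagged
```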

Common enterprise indexation issues:

- Staging or development environments leaking into production via improper canonical tags or missing noindex

- Faceted navigation generating millions of indexable parameter combinations

- International sites with duplicate content across language versions without proper hreflang

- Product pages with minor variations (color, size) creating near-duplicates

- Archive or historical content without proper noindex implementation

Phase 5: Internationalization and Hreflang

If you operate in multiple countries or languages, hreflang implementation is where most enterprise sites fail quietly. Users in Germany get English content. Search engines index the wrong language version. Organic traffic goes to the wrong regional site.

Hreflang audit checklist:

- Implementation method: Are you using HTML tags, XML sitemaps, or HTTP headers? Each has different validation requirements. The method should match your architecture – HTTP headers work best for non-HTML resources, XML sitemaps for massive implementations, HTML tags for smaller sets.

- Bidirectional validation: Every hreflang annotation must be reciprocal. If your US page points to your UK page, your UK page must point back to your US page.

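- Language-region mapping: Verify codes use ISO 639-1 for the language and ISO 3166-1 Alpha 2 for the optional region (en-US, en-GB, es-MX). Language-only codes such as en or es are valid when you target a language rather than a specific country.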

- X-default specification: Do you have a default page for users whose language/region doesn’t match any specific version?

- Self-referencing requirement: Each page must include a self-referencing hreflang tag

- Canonical-hreflang alignment: Canonical tags must not conflict with hreflang annotations. A canonical pointing to a different region than hreflang creates contradictory signals.

Common hreflang errors:

- Missing return tags (US page points to UK, but UK doesn’t point back)

- Incorrect language codes (using en-UK instead of en-GB)

- Canonical and hreflang conflicts

- Hreflang pointing to redirected or non-canonical URLs

- Incomplete hreflang sets (if you have 5 language versions, each page needs all 5 hreflang annotations, one of which is its self-reference)

- Hreflang annotations pushed below the 2MB HTML cutoff on template-heavy pages

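Google retired Search Console’s International Targeting report in 2022. Validate implementation instead with a crawler that extracts every hreflang annotation and checks return tags, language-region codes, and the status of each target URL. A single implementation error can cascade across thousands of pages.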
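A crawler-side reciprocity check can be sketched as follows. It assumes your crawler outputs, for each page, a mapping of hreflang code to target URL. The code check only tests format (language, optional script, optional region, or x-default) plus the common "UK" mistake; it does not validate against the full ISO lists. `hreflang_errors` is a hypothetical helper.

```python
import re

# Simplified format check: language, optional script, optional region, or x-default.
CODE_RE = re.compile(r"^(x-default|[a-z]{2}(-[a-z]{4})?(-[a-z]{2})?)$", re.I)


def hreflang_errors(annotations):
    """annotations: {page_url: {hreflang_code: target_url}} extracted by a crawler."""
    errors = []
    for page, alternates in annotations.items():
        if page not in alternates.values():
            errors.append((page, "missing self-reference"))
        for code, target in alternates.items():
            # "UK" is a common mistake: the ISO 3166-1 code for the United Kingdom is GB.
            if not CODE_RE.match(code) or code.lower().endswith("-uk"):
                errors.append((page, f"suspicious hreflang code {code!r}"))
            if target == page:
                continue
            back = annotations.get(target)
            if back is None:
                errors.append((page, f"{code} target {target} has no crawled hreflang set"))
            elif page not in back.values():
                errors.append((page, f"no return tag from {target}"))
    return errors
```

Run it over the whole crawl rather than a sample. One template-level mistake often produces the same error on every page that uses the template.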

Phase 6: Core Web Vitals – Beyond “Make It Faster”

Google’s Core Web Vitals are ranking factors and user experience metrics that directly correlate with conversion rates.

Current Core Web Vitals thresholds (2026), assessed at the 75th percentile of page loads:

| Metric | Good | Needs Improvement | Poor | Common Enterprise Causes |
|---|---|---|---|---|
| Largest Contentful Paint (LCP) | ≤ 2.5s | 2.5-4.0s | > 4.0s | Unoptimized hero images, render-blocking resources, slow TTFB, CDN misconfigurations |
| Interaction to Next Paint (INP) | ≤ 200ms | 200-500ms | > 500ms | Heavy JavaScript execution, third-party scripts blocking main thread, long tasks, event handler complexity |
| Cumulative Layout Shift (CLS) | ≤ 0.1 | 0.1-0.25 | > 0.25 | Images without dimensions, late-loading ads/embeds, web fonts causing reflow, dynamic content injection |

Note: Google replaced First Input Delay (FID) with Interaction to Next Paint (INP) as a Core Web Vital in March 2024. INP measures responsiveness across the entire page lifecycle, not just the first interaction. If your audit framework still references FID, it’s outdated.

Field vs. lab data distinction:

- Field data (Chrome UX Report): Real user measurements from actual Chrome browsers. This is what Google uses for rankings.

- Lab data (Lighthouse, PageSpeed Insights): Simulated tests in controlled environments. Useful for diagnosis but not what Google ranks on.

Your audit must analyze both. Lab data tells you what’s possible. Field data tells you what real users experience.

Origin vs. URL-level performance:

Enterprise sites often pass CWV at the origin level (site-wide aggregate) but fail at specific URL group levels. A fast homepage and blog can mask catastrophically slow product pages. Always segment CWV data by URL group and template type – this is where actionable insights live.

Template-level analysis: Don’t just look at site-wide averages. Segment by template type (homepage, category pages, product pages, blog posts). Often, one template type drags down overall performance while others perform well.
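Template-level segmentation can be computed from your own real-user monitoring (RUM) samples. The sketch below assumes each page view is tagged with its template. It rates the 75th percentile of each group against the thresholds in the table above. CrUX computes its percentiles from its own aggregated data, so this approximates Google's numbers rather than reproducing them. The metric keys such as `lcp_ms` are hypothetical.

```python
from collections import defaultdict
from statistics import quantiles

# (good_max, needs_improvement_max) per metric, from the thresholds table above.
THRESHOLDS = {"lcp_ms": (2500, 4000), "inp_ms": (200, 500), "cls": (0.1, 0.25)}


def p75(values):
    # quantiles(n=4) returns the three quartile cut points; index 2 is the 75th percentile.
    return quantiles(values, n=4, method="inclusive")[2]


def rate(metric, value):
    good_max, needs_improvement_max = THRESHOLDS[metric]
    if value <= good_max:
        return "good"
    if value <= needs_improvement_max:
        return "needs improvement"
    return "poor"


def template_report(samples):
    """samples: dicts with 'template', 'metric', 'value' from field (RUM) data."""
    grouped = defaultdict(list)
    for s in samples:
        grouped[(s["template"], s["metric"])].append(s["value"])
    report = {}
    for (template, metric), values in grouped.items():
        value = p75(values)  # statistics.quantiles needs at least two samples per group
        report[(template, metric)] = (value, rate(metric, value))
    return report
```

A report where `("product", "lcp_ms")` is poor while the blog template is good is exactly the origin-level masking described above.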

Third-party script audit: Enterprise sites typically load 20-40 third-party scripts (analytics, marketing tags, chat widgets, A/B testing tools, consent management). Each one impacts INP and LCP. Audit which scripts are business-critical vs. nice-to-have, and implement proper loading strategies (defer, async, lazy load after interaction).

Phase 7: Edge SEO and CDN-Layer Optimization

Modern enterprise SEO increasingly happens at the edge – the CDN layer between your origin server and the user’s browser. This is one of the most powerful and underutilized tools in the enterprise SEO arsenal.

What edge SEO enables:

- SEO fixes without code deployments: Implement redirects, inject hreflang tags, modify meta tags, and add structured data at the CDN layer using Cloudflare Workers, Akamai EdgeWorkers, or Fastly VCL – without touching the application code or waiting for a development sprint.

- Performance optimization: Server-side rendering at the edge, HTML minification, image optimization, and response header management.

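- Bot-specific handling: Serve pre-rendered pages to search engine crawlers while serving the full JavaScript app to users. This is dynamic rendering implemented at the infrastructure level. Note that Google now describes dynamic rendering as a workaround rather than a long-term solution, and recommends server-side rendering, static rendering, or hydration instead.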

- A/B testing for SEO: Test title tags, meta descriptions, or structured data variations without application-level changes.

Why this matters for enterprise audits:

Many enterprise sites have deployment cycles measured in weeks or months. Edge SEO provides a way to implement critical fixes in hours. Your audit should assess CDN capabilities and recommend edge-based solutions for high-priority fixes that can’t wait for the next development sprint.

Phase 8: Structured Data and SERP Features

Structured data markup (Schema.org) communicates with search engines about what your content represents. Proper implementation unlocks rich results, knowledge panels, and enhanced SERP features that increase click-through rates.

Enterprise structured data audit:

- Coverage analysis: Which templates have structured data? Which are missing opportunities?

- Schema type validation: Are you using the most specific schema types for your content? Product schema for products, not just generic WebPage.

- Required vs. recommended properties: Are you including only required properties or also recommended ones that unlock enhanced features?

- Nested schema implementation: Complex pages (product pages with reviews, recipes with nutrition info) require nested schema structures

- JSON-LD placement: Given the 2MB HTML limit, verify JSON-LD blocks appear early in the document. Large product catalog schemas with extensive offer data can consume significant bytes.

Rich result eligibility testing:

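Use Google’s Rich Results Test to validate Product, Review, FAQ, Event, and Organization schema types. Each has specific required properties that determine eligibility. Since 2023, Google no longer shows HowTo rich results and limits FAQ rich results to well-known, authoritative government and health sites, so weigh those types accordingly.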

Common enterprise schema errors:

- Outdated schema types (using deprecated types instead of current recommendations)

- Missing required properties (Product schema without price or availability)

- Schema-content mismatch (schema claims 5-star rating but page shows 3 stars)

- Duplicate schema (same schema implemented multiple times on one page)

- Schema on non-indexable pages (wasted implementation effort)

- Massive JSON-LD blocks consuming HTML budget when placed inline
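Several of these errors can be caught mechanically per page. The sketch below assumes the JSON-LD sits in `<script type="application/ld+json">` tags that a regex can extract. It treats only `offers.price` and `offers.availability` as the required Product fields, mirroring the example above rather than Google's full requirements. The 50,000-byte block limit is an illustrative assumption. Duplicate types are flagged for review, since a listing page can legitimately contain several Product items. Schema-content mismatches still need a human check.

```python
import json
import re
from collections import Counter

JSON_LD_RE = re.compile(
    r'<script[^>]*type=["\']application/ld\+json["\'][^>]*>(.*?)</script>', re.I | re.S
)


def audit_json_ld(html, max_block_bytes=50_000):
    """Flag invalid, oversized, duplicated, or incomplete JSON-LD blocks in one page."""
    issues, types = [], Counter()
    for i, match in enumerate(JSON_LD_RE.finditer(html)):
        raw = match.group(1)
        size = len(raw.encode("utf-8"))
        if size > max_block_bytes:
            issues.append(f"block {i}: {size} bytes of inline JSON-LD")
        try:
            data = json.loads(raw)
        except json.JSONDecodeError as exc:
            issues.append(f"block {i}: invalid JSON ({exc.msg})")
            continue
        if isinstance(data, list):
            items = data
        elif isinstance(data, dict):
            items = data.get("@graph", [data])
        else:
            items = []
        for item in items:
            if not isinstance(item, dict):
                continue
            schema_type = str(item.get("@type"))
            types[schema_type] += 1
            if schema_type == "Product":
                offers = item.get("offers") or {}
                if isinstance(offers, list):
                    offers = offers[0] if offers else {}  # simplification: check the first offer
                for prop in ("price", "availability"):
                    if prop not in offers:
                        issues.append(f"block {i}: Product offer missing {prop}")
    issues += [f"duplicate {t} schema ({n} copies)" for t, n in types.items() if n > 1]
    return issues
```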

Phase 9: Headless CMS and Modern Architecture Considerations

Enterprise sites increasingly run headless architectures (Contentful, Strapi, Sanity, headless Shopify, commercetools). The SEO implications differ significantly from traditional CMS setups.

Headless architecture audit points:

- Content delivery: How does content get from the headless CMS to the rendered page? Is there a build step, or is content fetched client-side?

- URL management: Who controls URL structure – the CMS, the frontend framework, or a routing layer? Misalignment creates canonicalization problems.

- Preview and staging: Can content editors preview how pages will look to search engines before publishing?

- Sitemap generation: Is sitemap generation automated and does it reflect the actual frontend URLs (not CMS-internal paths)?

- Structured data injection: Where does schema markup get added – in the CMS content model, the frontend template, or via edge workers?

For enterprise sites running decoupled architectures, the audit must trace the content pipeline from CMS to CDN to rendered output, identifying where SEO signals can be lost or misconfigured at each layer.

Phase 10: Security, Compliance, and Technical Hygiene

Security headers and technical compliance don’t directly improve rankings, but their absence can tank your site or expose you to penalties.

Security header audit:

- HTTPS implementation with valid certificates across all subdomains

- HSTS (HTTP Strict Transport Security) preventing protocol downgrade attacks

- Content Security Policy mitigating XSS and unauthorized script execution

- X-Frame-Options preventing clickjacking

- Referrer-Policy controlling referrer information sent with requests

Robots directives validation:

- Robots.txt accuracy: Are you blocking resources Google needs to render pages?

- Meta robots consistency: Verify noindex, nofollow, and other directives match intent

- X-Robots-Tag headers properly configured at the server level

- Sensitive path protection: Admin panels, development environments, and staging sites properly restricted
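A first pass at the "are you blocking resources Google needs" question can use Python's standard `urllib.robotparser`. Treat the result as approximate. The stdlib parser applies the first matching rule and does not implement Google's `*`/`$` wildcards or longest-match precedence, so confirm edge cases with a Google-compatible parser. `blocked_urls` is a hypothetical helper. In practice its input would be the CSS, JS, and image URLs your rendered templates request.

```python
from urllib import robotparser


def blocked_urls(robots_txt, urls, user_agent="Googlebot"):
    """Return the URLs that robots.txt disallows for the given crawler."""
    parser = robotparser.RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return [url for url in urls if not parser.can_fetch(user_agent, url)]
```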

Technical compliance:

- Mobile-friendliness: Responsive design, viewport configuration, touch target sizing

- Accessibility basics: Alt text coverage, heading hierarchy, ARIA labels – these overlap with SEO best practices

- Legal compliance: Cookie consent, privacy policy accessibility, GDPR/CCPA signals

Phase 11: Backlink Risk and Opportunity Assessment

A forensic audit includes a backlink health check because toxic backlinks can trigger manual actions that override all your technical optimizations.

Backlink risk analysis:

- Toxic link clusters from link farms, PBNs, or spammy directories

- Anchor text over-optimization with unnatural exact-match concentration

- Sudden backlink spikes suggesting negative SEO

- Disavow file review for currency and completeness

Link reclamation opportunities:

- Broken backlinks: External sites linking to your 404 pages (redirect opportunities)

- Unlinked brand mentions: Sites mentioning your brand without linking

- Competitor backlink gaps: High-authority links pointing to competitors but not to you

- Lost links: Previously strong backlinks that have been removed or changed

For deeper analysis, explore our backlink analysis and risk assessment services. If you’ve been impacted by manual actions, our Google penalty removal services can accelerate recovery.

Phase 12: Analytics, Tracking, and CI/CD Integration

You can’t optimize what you can’t measure. Enterprise sites often have tracking gaps, misconfigured events, or incomplete instrumentation that makes it impossible to prove SEO ROI.

Analytics audit components:

- Tracking parity: Does your analytics platform accurately capture organic traffic, conversions, and user behavior?

- Event taxonomy: Are custom events properly configured to track key user actions?

- Cross-domain tracking: For multi-domain properties, is tracking configured to follow users across domains?

- Organic traffic segmentation: Can you separate branded vs. non-branded organic traffic?

- Search query attribution: Are you capturing search queries that drive conversions?

CI/CD pipeline integration:

Enterprise SEO fixes go through CI/CD pipelines, staging environments, and QA processes. Your audit should assess how SEO validation integrates into deployment workflows:

- Pre-deploy SEO checks: Automated validation of title tags, canonical tags, meta robots, structured data, and hreflang before code reaches production

- Regression testing: Automated tests that catch SEO-breaking changes (accidental noindex, canonical changes, redirect removals)

- Monitoring alerts: Real-time alerts for sudden indexation drops, crawl error spikes, or Core Web Vitals degradation after deployments

- Rollback triggers: Predefined criteria for reverting deployments that negatively impact organic performance
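A pre-deploy check can be small enough to run on every build. The sketch below assumes the pipeline can fetch rendered HTML for a handful of representative staging URLs, each with a known expected canonical. `seo_gate` is a hypothetical helper. It covers only the title, canonical, and meta robots; X-Robots-Tag headers, hreflang, and structured data would need further checks.

```python
from html.parser import HTMLParser


class _HeadSignals(HTMLParser):
    """Extracts <title>, canonical links, and robots meta directives."""

    def __init__(self):
        super().__init__()
        self.title, self.canonicals, self.robots = None, [], []
        self._in_title = False

    def handle_starttag(self, tag, attrs):
        a = {key: (value or "") for key, value in attrs}
        if tag == "title":
            self._in_title, self.title = True, ""
        elif tag == "link" and a.get("rel", "").lower() == "canonical":
            self.canonicals.append(a.get("href", ""))
        elif tag == "meta" and a.get("name", "").lower() in ("robots", "googlebot"):
            self.robots.append(a.get("content", "").lower())

    def handle_endtag(self, tag):
        if tag == "title":
            self._in_title = False

    def handle_data(self, data):
        if self._in_title:
            self.title += data


def seo_gate(html, expected_canonical):
    """Return a list of failures; an empty list means the page passes."""
    signals = _HeadSignals()
    signals.feed(html)
    signals.close()
    failures = []
    if not (signals.title or "").strip():
        failures.append("missing or empty <title>")
    if signals.canonicals != [expected_canonical]:
        failures.append(f"canonical mismatch: {signals.canonicals}")
    if any("noindex" in directive for directive in signals.robots):
        failures.append("noindex present")
    return failures
```

A CI step would call `seo_gate` for each representative URL and exit non-zero if any list is non-empty. That stops a staging canonical or a leftover noindex before it reaches production.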

Dashboard and reporting infrastructure:

Create executive-friendly dashboards connecting technical metrics to business outcomes using tools like Reportz.io for automated, live reporting:

- Traffic impact dashboard with year-over-year comparisons

- Indexation health dashboard with crawl efficiency metrics

- Performance dashboard with CWV percentiles by template

- Conversion impact dashboard with revenue attribution

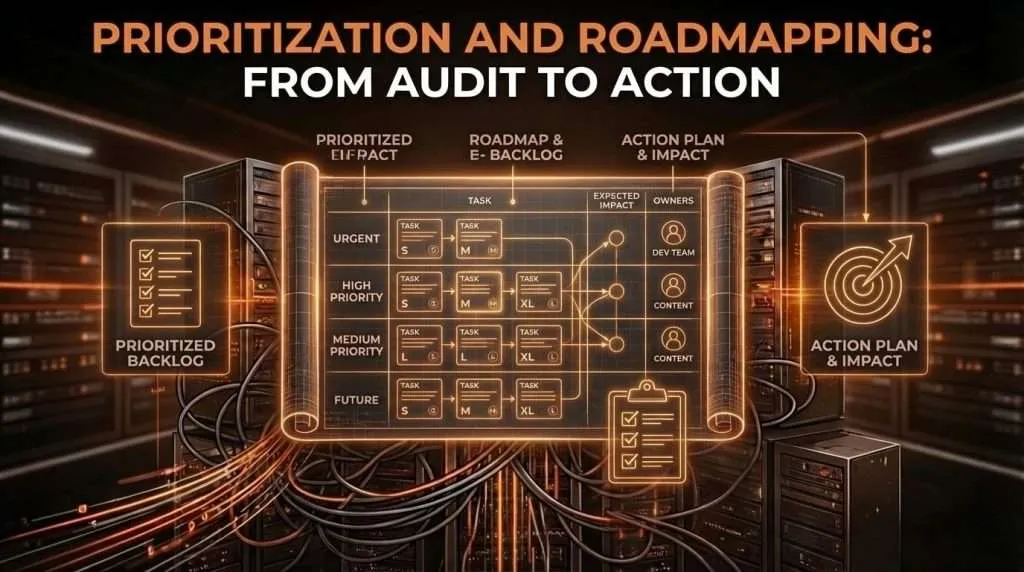

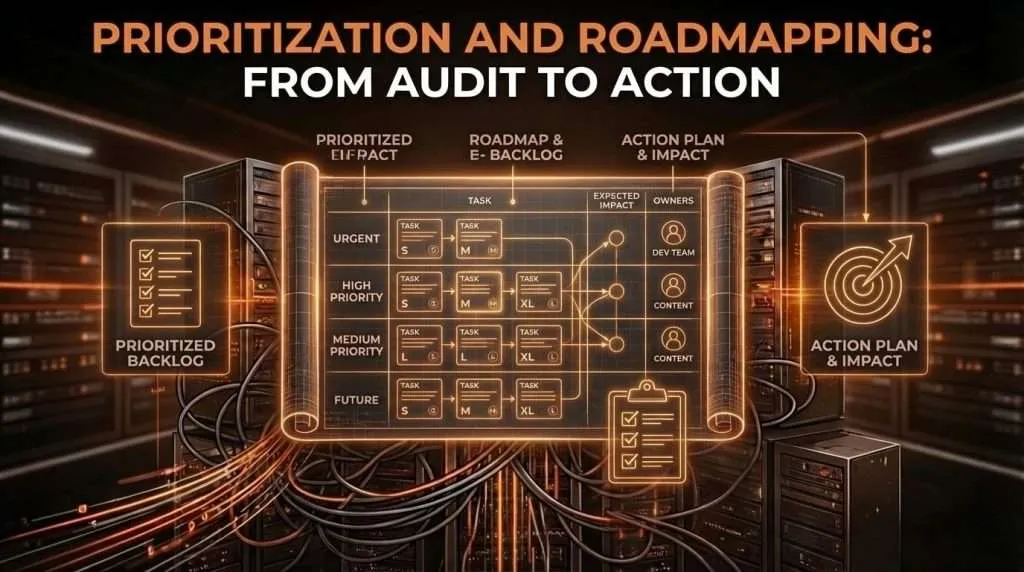

Prioritization and Roadmapping: From Audit to Action

The audit deliverable that matters most isn’t a 200-page report. It’s the prioritized backlog with clear owners, effort estimates, and expected impact.

Impact x Risk / Effort prioritization matrix:

| Issue Category | Impact (1-10) | Effort (1-10) | Risk (1-10) | Priority Score | Sprint |
|---|---|---|---|---|---|
| HTML bloat / byte-order optimization | 8 | 3 | 6 | 16 | Sprint 1 |
| Faceted navigation canonical fix | 9 | 3 | 8 | 24 | Sprint 1 |
| Product schema implementation | 7 | 4 | 2 | 3.5 | Sprint 1 |
| Core Web Vitals – LCP/INP optimization | 8 | 6 | 4 | 5.3 | Sprint 2 |
| Hreflang implementation fix | 6 | 5 | 7 | 8.4 | Sprint 2 |
| Internal linking architecture | 7 | 7 | 3 | 3 | Sprint 3 |
| Edge SEO implementation | 7 | 4 | 3 | 5.25 | Sprint 1 |
| Index bloat remediation | 8 | 5 | 5 | 8 | Sprint 2 |

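Priority score calculation: (Impact x Risk) / Effort, where Risk scores the cost of leaving the issue unfixed, so a higher risk raises priority.

This formula surfaces quick wins (high impact, low effort) and critical risks (high impact, high risk). It pushes low-impact changes down the list unless they are nearly effortless.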
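The scoring is easy to keep in code alongside the backlog, so it can be re-ranked whenever estimates change. The sketch below reproduces the table's inputs and sorts the issues by score. The `Issue` class is a hypothetical structure. The sorted order is a starting point only: sprint placement may still weigh dependencies and team capacity.

```python
from dataclasses import dataclass


@dataclass
class Issue:
    name: str
    impact: int  # 1-10
    effort: int  # 1-10
    risk: int    # 1-10, cost of leaving the issue unfixed

    @property
    def score(self) -> float:
        """Priority score = (Impact x Risk) / Effort."""
        return self.impact * self.risk / self.effort


backlog = [
    Issue("HTML bloat / byte-order optimization", 8, 3, 6),
    Issue("Faceted navigation canonical fix", 9, 3, 8),
    Issue("Product schema implementation", 7, 4, 2),
    Issue("Core Web Vitals LCP/INP optimization", 8, 6, 4),
    Issue("Hreflang implementation fix", 6, 5, 7),
    Issue("Internal linking architecture", 7, 7, 3),
    Issue("Edge SEO implementation", 7, 4, 3),
    Issue("Index bloat remediation", 8, 5, 5),
]

ranked = sorted(backlog, key=lambda issue: issue.score, reverse=True)
for issue in ranked:
    print(f"{issue.score:6.2f}  {issue.name}")
```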

Sprint-ready ticket template:

Each issue needs a developer-friendly ticket with:

- Title: Clear, specific description of the issue

- Business impact: Why this matters in revenue/traffic terms

- Current state: What’s happening now (with screenshots/data)

- Desired state: What should happen instead

- Acceptance criteria: How to verify the fix worked

- Technical implementation: Specific code changes or configuration updates required

- Testing requirements: How to QA the fix, including SEO regression tests

- Rollback plan: How to undo if the fix causes problems

- KPI tracking: Which metrics to monitor post-implementation

Implementation Timeline: 30-60-90 Day Remediation Plan

Days 1-30: Foundation and Quick Wins

- Complete audit delivery and stakeholder alignment

- Fix critical indexation issues (noindex on priority pages, robots.txt blocking)

- Implement HTML byte-order optimization on highest-traffic templates

- Deploy edge SEO fixes for redirects and header management

- Implement redirect fixes for broken high-traffic pages

- Deploy product schema on top-converting templates

- Set up monitoring dashboards and alerting via Reportz.io

- Configure CI/CD pre-deploy SEO validation

Expected outcomes: 5-10% indexation coverage improvement, 3-5% traffic recovery from redirect fixes, rich results eligibility for product pages
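Accidental robots.txt blocking of priority pages can be caught by testing a list of priority URLs against the live or staged file. This sketch uses Python's standard `urllib.robotparser`. Its matching is simpler than Google's: it applies the first matching rule rather than the most specific one and does not support `*` or `$` wildcards, so treat a clean result as a first pass rather than proof. The function name is hypothetical.

```python
from urllib.robotparser import RobotFileParser


def blocked_priority_urls(robots_txt: str, urls: list, user_agent: str = "Googlebot") -> list:
    """Returns the priority URLs that this robots.txt would block for user_agent."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())  # parse() also marks the rules as loaded
    return [url for url in urls if not parser.can_fetch(user_agent, url)]
```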

Days 31-60: Architecture and Performance

- Implement faceted navigation canonicalization strategy

- Remediate index bloat (remove low-value URLs from index)

- Fix hreflang implementation for international sites

- Address top 3 Core Web Vitals issues by template (LCP, INP, CLS)

- Optimize internal linking for strategic pages

- Deploy structured data across remaining templates

- Implement soft 404 detection and remediation

Expected outcomes: 15-25% crawl efficiency improvement, measurable Core Web Vitals improvement across target templates, 10-15% traffic recovery
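One hreflang check that maps well to code is return-link (reciprocity) validation. Google ignores hreflang annotations between two pages unless each page points to the other. This minimal sketch assumes the hreflang annotations have already been extracted from a crawl into a dictionary of page URL to `{language-region code: alternate URL}`. The data shape and function name are hypothetical.

```python
def missing_return_links(hreflang_map: dict) -> list:
    """Returns (source, target) pairs where target does not list source as an alternate."""
    problems = []
    for source, alternates in hreflang_map.items():
        for target in alternates.values():
            if target == source:
                continue  # self-referencing entry, nothing to reciprocate
            if source not in hreflang_map.get(target, {}).values():
                problems.append((source, target))
    return problems
```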

Days 61-90: Advanced Optimization and Monitoring

- Complete site architecture improvements

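- Implement advanced schema types (FAQ, HowTo, Review), keeping in mind that Google now limits FAQ rich results to well-known government and health sites and no longer shows HowTo rich results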

- Optimize remaining performance bottlenecks

- Deploy headless CMS SEO configuration fixes

- Establish ongoing monitoring and reporting cadence

- Document processes and train internal teams

- Run post-implementation audit to validate fixes and measure impact

Expected outcomes: 20-30% total traffic recovery across the 90-day period, sustainable performance improvements, internal team enablement for ongoing optimization

Measuring Success: KPIs That Matter

Crawl efficiency metrics: Crawl-to-index ratio improvement. Crawl waste reduction. Average crawl depth to priority pages. Render success rate for JavaScript-heavy pages. Time-to-index for new content.

Indexation health metrics: Total indexed pages vs. intended indexable pages. Index bloat ratio. Coverage issue resolution rate. Duplicate content reduction. Soft 404 elimination rate.
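The text does not define these ratios precisely, so the definitions below are assumptions: index bloat ratio as the share of indexed URLs you never intended to be indexed, and crawl-to-index ratio as the share of crawled URLs that end up indexed. The sketch assumes three URL sets, for example indexed URLs from a Search Console page-indexing export, intended URLs from sitemaps or the CMS, and crawled URLs from server logs. The function name is hypothetical.

```python
def indexation_kpis(indexed: set, intended: set, crawled: set) -> dict:
    """Set-based KPI sketch; each ratio falls back to 0.0 when its denominator is empty."""
    bloat = indexed - intended  # indexed URLs nobody meant to have indexed
    return {
        "index_bloat_ratio": len(bloat) / len(indexed) if indexed else 0.0,
        "coverage_of_intended": len(intended & indexed) / len(intended) if intended else 0.0,
        "crawl_to_index_ratio": len(crawled & indexed) / len(crawled) if crawled else 0.0,
    }
```

Tracking these ratios per template, rather than site-wide, makes it easier to tie a change back to a specific fix.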

Performance metrics: Core Web Vitals percentile improvements (75th percentile). INP scores by template type. Mobile vs. desktop performance parity. Template-level LCP and CLS improvements. Third-party script load time reduction.
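Google assesses Core Web Vitals at the 75th percentile of page loads, so template-level reporting should use the same statistic. This sketch assumes you collect your own field samples as `(template, value)` pairs, for example LCP in milliseconds from real-user monitoring. It uses the nearest-rank percentile method; other percentile definitions, and CrUX's own aggregation, can give slightly different values. The function name is hypothetical.

```python
import math
from collections import defaultdict


def p75_by_template(samples: list) -> dict:
    """Returns the nearest-rank 75th percentile of metric values for each template."""
    by_template = defaultdict(list)
    for template, value in samples:
        by_template[template].append(value)
    result = {}
    for template, values in by_template.items():
        values.sort()
        rank = math.ceil(0.75 * len(values))  # 1-based nearest rank
        result[template] = values[rank - 1]
    return result
```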

Business impact metrics: Organic traffic recovery percentage. Organic conversion rate improvement. Revenue attribution to technical fixes. SERP feature acquisition (rich results, featured snippets). AI platform visibility changes.

For teams looking to operationalize these audit insights into ongoing optimization, our content strategy services help translate technical fixes into sustainable content and optimization workflows.

When to Bring in Forensic Audit Expertise

You need an enterprise forensic audit when:

- You’re planning a migration (replatforming, domain change, URL restructure)

- Organic traffic has declined 15%+ without clear cause

- Your site has 10,000+ pages with complex architecture

- You’re operating in multiple countries/languages with hreflang

- You have JavaScript rendering or single-page application complexity

- Your crawl budget is constrained and you need to optimize allocation

- Your HTML templates exceed 1MB and you’re concerned about Google’s 2MB fetch limit

- You’ve received a manual action or algorithmic penalty

- You’re running a headless CMS architecture and need SEO validation

- You need executive-ready reporting to justify SEO investment

The ROI math is straightforward: on a site generating $10M annually in organic revenue, a 10% traffic recovery is worth roughly $1M a year (assuming revenue scales with traffic), so a comprehensive audit with 90-day implementation can pay for itself many times over.
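The same arithmetic as a small calculator, assuming organic revenue scales linearly with organic traffic over one year. The $10M revenue and 10% recovery come from the example above. The $100,000 audit cost is a purely hypothetical input for illustration, not a quoted price.

```python
def audit_roi(annual_organic_revenue: float, traffic_recovery: float, audit_cost: float) -> float:
    """Net return as a multiple of cost: (recovered revenue - cost) / cost."""
    recovered_revenue = annual_organic_revenue * traffic_recovery
    return (recovered_revenue - audit_cost) / audit_cost


def break_even_recovery(annual_organic_revenue: float, audit_cost: float) -> float:
    """Traffic recovery share at which recovered revenue exactly covers the audit cost."""
    return audit_cost / annual_organic_revenue


# Hypothetical audit cost; substitute your own quote and revenue figures
print(audit_roi(10_000_000, 0.10, 100_000))
print(break_even_recovery(10_000_000, 100_000))
```

Under these inputs the net return is about nine times the cost, and a 1% recovery would break even.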

For agencies managing enterprise clients, our white label technical audit services provide the forensic depth your clients need under your brand.

Migration Protection Blueprint

Enterprise migrations (replatforming, domain changes, URL restructuring) are where technical audits prove their value most dramatically. A thorough pre-migration audit and post-migration validation can be the difference between a smooth transition and a 40% traffic loss.

Pre-migration audit requirements:

- Complete URL inventory, categorized by template type and business value

- Traffic and conversion baseline for every significant URL

- Detailed 1:1 redirect mapping of old URLs to new URLs

- Content parity verification ensuring critical elements survive the migration

- Technical feature inventory: structured data, hreflang, canonical tags, redirects
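Before launch, the 1:1 redirect map can be checked against the URL inventory for gaps and obvious anti-patterns. This sketch assumes the inventory is a set of old paths and the map is a dictionary of old path to new path. The function name and the three checks are illustrative rather than exhaustive.

```python
def validate_redirect_map(old_urls: set, redirect_map: dict, homepage: str) -> dict:
    """Pre-launch checks on a planned old URL -> new URL mapping."""
    return {
        # Old URLs in the inventory that have no planned destination
        "unmapped": sorted(old_urls - set(redirect_map)),
        # Old URLs dumped onto the homepage instead of a relevant equivalent
        "homepage_defaults": sorted(
            old for old, new in redirect_map.items() if new == homepage and old != homepage
        ),
        # Old URLs whose target is itself being redirected (a chain in the making)
        "chained": sorted(
            old for old, new in redirect_map.items()
            if new in redirect_map and redirect_map[new] != new
        ),
    }
```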

Migration protection checklist:

- Redirect accuracy testing: Validate every redirect points to the correct new URL (not homepage defaults)

- Redirect chain elimination: Ensure redirects are direct (old to new), not chained

- Status code verification: Confirm 301 permanent redirects, not 302 temporary

- Canonical tag migration: New URLs have proper self-referencing canonicals

- Hreflang migration: International sites maintain proper language-region targeting

- Structured data migration: Schema markup properly implemented on new templates

- HTML byte-order validation: New templates keep critical SEO elements (meta tags, canonicals, structured data) near the top of the HTML document, well within Google's 2MB fetch limit

- Internal link updates: Internal links point to new URLs, not old redirecting URLs

- Sitemap updates: XML sitemaps contain new URLs, submitted to Search Console

- Robots.txt verification: No accidental blocking of important sections

- Analytics configuration: Tracking properly configured for new URL structure
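Redirect accuracy, chain elimination and status code verification can be tested together once old URLs have been crawled without following redirects. This sketch assumes the crawl output is a dictionary of absolute URL to `(status, Location)`. The data shape and function name are hypothetical. It accepts 308 alongside 301 because Google treats both as permanent redirects.

```python
from typing import Optional
from urllib.parse import urljoin

REDIRECT_STATUSES = {301, 302, 303, 307, 308}
PERMANENT_STATUSES = {301, 308}  # Google treats 308 the same way as 301


def check_redirect(old_url: str, expected_url: str,
                   responses: dict,
                   max_hops: int = 10) -> list:
    """Follows redirects through crawled (status, Location) responses for one old URL.

    Returns a list of problems; an empty list means it passed.
    URLs missing from the table are treated as 404s.
    """
    hops = []
    seen = set()
    url: Optional[str] = old_url
    while True:
        if url in seen or len(hops) >= max_hops:
            return [f"redirect loop or more than {max_hops} hops from {old_url}"]
        seen.add(url)
        status, location = responses.get(url, (404, None))
        hops.append((url, status))
        if status not in REDIRECT_STATUSES or location is None:
            break
        url = urljoin(url, location)  # resolve relative Location headers

    problems = []
    first_status = hops[0][1]
    final_url, final_status = hops[-1]
    if first_status not in PERMANENT_STATUSES:
        problems.append(f"first response is {first_status}, expected a permanent redirect")
    if len(hops) > 2:
        problems.append(f"redirect chain with {len(hops) - 1} hops")
    if final_url != expected_url:
        problems.append(f"ends at {final_url}, expected {expected_url}")
    if final_status != 200:
        problems.append(f"final status is {final_status}")
    return problems
```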

Post-migration rollback criteria (define before migration):

- Organic traffic drop exceeding 15% for three consecutive days

- Indexation coverage drop exceeding 20%

- Conversion rate drop exceeding 25%

- Critical functionality failures (checkout, forms, key user paths)

- Core Web Vitals regression exceeding 30%

Having predefined rollback criteria and a tested rollback procedure reduces migration risk and stakeholder anxiety.
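Encoding the rollback criteria ahead of launch removes debate in the moment. This sketch hard-codes the thresholds listed above. It assumes each metric is expressed as a relative change against a pre-migration baseline (for example, -0.18 for an 18% traffic drop versus the same weekdays before launch). The `MigrationSnapshot` fields and function name are hypothetical.

```python
from dataclasses import dataclass, field


@dataclass
class MigrationSnapshot:
    daily_traffic_change: list      # per-day organic traffic change vs. baseline, oldest first
    indexed_change: float           # change in indexed pages vs. baseline
    conversion_rate_change: float   # relative change in conversion rate
    cwv_regression: float           # relative worsening of the tracked CWV metric, e.g. p75 LCP
    critical_failures: list = field(default_factory=list)  # e.g. "checkout"


def rollback_reasons(s: MigrationSnapshot) -> list:
    """Returns every rollback criterion that has been met; an empty list means hold course."""
    reasons = []
    run = 0  # consecutive days with traffic down more than 15%
    for change in s.daily_traffic_change:
        run = run + 1 if change < -0.15 else 0
        if run >= 3:
            reasons.append("organic traffic down more than 15% for three consecutive days")
            break
    if s.indexed_change < -0.20:
        reasons.append("indexation coverage down more than 20%")
    if s.conversion_rate_change < -0.25:
        reasons.append("conversion rate down more than 25%")
    if s.cwv_regression > 0.30:
        reasons.append("Core Web Vitals regressed more than 30%")
    for failure in s.critical_failures:
        reasons.append(f"critical functionality failure: {failure}")
    return reasons
```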

Frequently Asked Questions

How long does an enterprise technical SEO audit take?

A comprehensive forensic audit typically takes 3-4 weeks for sites with 50,000-500,000 pages. This includes data collection (1 week), analysis and diagnostics (1-2 weeks), and deliverable preparation (1 week). Larger sites or those with complex international implementations may require 5-6 weeks.

What’s the difference between a technical audit and a forensic audit?

A standard technical audit identifies surface-level issues using crawlers and automated tools. A forensic audit goes deeper: log file analysis segmented by bot type, JavaScript rendering validation against the behavior of Google's Web Rendering Service (WRS), HTML byte-level analysis against Google's 2MB fetch limit, crawl budget optimization, index bloat diagnostics, and root cause diagnosis. Forensic audits include prioritization frameworks and sprint-ready implementation roadmaps.

Do I need server log access?

Yes, for a true forensic audit. Server logs reveal what search engines actually crawl versus what you think you’re serving. They show crawl budget allocation, orphaned pages, blocked resources, bot behavior patterns, and crawler segmentation by user agent. Without log access, you’re missing 30-40% of critical insights.
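A first pass at crawler segmentation is counting Googlebot requests by site section and status code. This sketch assumes access logs in the common Apache/Nginx "combined" format. It matches on the user-agent string only, which can be spoofed, so production analysis should also verify Googlebot with a reverse DNS lookup. The regex and function name are illustrative.

```python
import re
from collections import Counter

# "combined" format: host ident user [time] "request" status size "referer" "user-agent"
LOG_LINE = re.compile(
    r'^(?P<ip>\S+) \S+ \S+ \[(?P<time>[^\]]+)\] "(?P<method>\S+) (?P<path>\S+) [^"]*" '
    r'(?P<status>\d{3}) \S+ "[^"]*" "(?P<agent>[^"]*)"'
)


def googlebot_hits_by_section(lines) -> Counter:
    """Counts requests claiming to be Googlebot, keyed by (first path segment, status)."""
    counts = Counter()
    for line in lines:
        match = LOG_LINE.match(line)
        if not match or "Googlebot" not in match["agent"]:
            continue  # unparseable line or a non-Googlebot user agent
        path = match["path"].split("?", 1)[0]  # drop query strings
        section = "/" + path.lstrip("/").split("/", 1)[0]
        counts[(section, match["status"])] += 1
    return counts
```

Comparing these counts against the sections you actually want crawled shows where crawl budget is going.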

How do you prioritize hundreds of technical issues?

We use an Impact x Risk / Effort prioritization matrix. Each issue is scored on business impact, risk (the exposure if the issue stays unfixed), and development effort. This surfaces quick wins and critical risks while deprioritizing low-impact changes. The output is a sprint-ready backlog with clear owners and timelines.

What about Google’s 2MB HTML fetch limit?

Google confirmed in March 2026 that Googlebot fetches a maximum of 2MB per URL (including HTTP headers). Everything beyond that cutoff is ignored for indexation and rendering. Our audit now includes HTML weight analysis by template type, verifying that critical SEO elements (meta tags, canonicals, structured data) appear early in the document. External CSS and JavaScript files are fetched separately with their own limits, so moving heavy code to external files is a key optimization.
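A basic byte-order check finds where each critical marker first appears in the raw HTML and flags any that start past the cutoff. This sketch makes several assumptions: it treats "2MB" conservatively as 2,000,000 uncompressed bytes, takes the size of the HTTP headers as an input because the limit includes them, and uses naive substring markers that will miss single-quoted or unquoted attributes. It checks where each element starts; the whole element also needs to fit. Names and markers are hypothetical.

```python
FETCH_LIMIT = 2_000_000  # conservative reading of "2MB"; 2 MiB would be 2,097,152

CRITICAL_MARKERS = {
    "title": b"<title",
    "meta robots": b'name="robots"',
    "canonical": b'rel="canonical"',
    "structured data": b"application/ld+json",
}


def marker_offsets(body: bytes, header_bytes: int = 0) -> dict:
    """Byte offset, counting headers first, where each marker first appears; absent markers are omitted."""
    lowered = body.lower()
    offsets = {}
    for name, marker in CRITICAL_MARKERS.items():
        position = lowered.find(marker)
        if position != -1:
            offsets[name] = header_bytes + position
    return offsets


def past_limit(offsets: dict, limit: int = FETCH_LIMIT) -> list:
    """Names of markers that start at or beyond the fetch limit."""
    return sorted(name for name, offset in offsets.items() if offset >= limit)
```

Running this per template on uncompressed HTML shows which signals are at risk before Googlebot ever fetches the page.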

Can you audit sites built on headless CMS architectures?

Yes. Headless architectures (Contentful, Strapi, headless Shopify) require additional audit steps: tracing the content pipeline from CMS to CDN to rendered output, validating URL management across the frontend framework, and ensuring structured data and SEO signals aren’t lost in the decoupled architecture.

What tools do you use?

The audit combines specialized tools: Screaming Frog for crawling, Google Search Console and its API for indexation data, server log analyzers for crawl behavior, Lighthouse and CrUX for Core Web Vitals, schema validators for structured data, and custom scripts for log analysis and byte-level diagnostics. We use Reportz.io for automated reporting and monitoring dashboards with daily data updates from GA4, GSC, and Ahrefs.

What happens after the audit?

You receive a prioritized backlog with sprint-ready tickets, an executive summary with ROI projections, and implementation timelines. Most clients engage us for ongoing implementation support and monitoring. We also integrate fixes into CI/CD pipelines so SEO validation becomes part of your deployment workflow rather than an afterthought.

The Bottom Line

An enterprise technical SEO audit isn’t an expense. It’s risk mitigation and revenue protection. Every day you operate with crawl budget waste, indexation gaps, HTML bloat pushing critical signals past Google’s fetch limit, or Core Web Vitals problems such as poor INP scores, you’re leaving traffic and revenue on the table.

The sites that dominate organic search won’t be the ones with the most content or the biggest budgets. They’ll be the ones with the cleanest technical foundation – optimized not just for how search engines evaluate content, but for how they physically fetch and process your bytes.

Your crawl budget is finite. Your engineering resources are constrained. Google’s 2MB fetch limit is real. The question isn’t whether to audit. It’s whether you’ll do it before or after your next traffic drop.

Ready to see what a forensic audit reveals about your enterprise site? Review how similar teams implemented these frameworks in our enterprise SEO case studies, or request a technical SEO audit consultation to discuss your specific challenges.
